For learning and development we usually work on Windows, so the system should meet the following requirements:

Windows 7 and greater;

Windows 10 or greater recommended;

Windows Server 2008 r2 and greater;

Prepare a Python environment that meets the following requirements:

Python 3.8-3.11 (supported);

Python 2.x (not supported);
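To check an existing interpreter against the version range listed above, a short script along these lines may help. It is only a sketch: `python_supported` is a hypothetical helper name, and the 3.8-3.11 bounds are simply the ones stated in this article.

```python
import platform
import sys


def python_supported(version_info=sys.version_info):
    """Return True if the interpreter falls in the 3.8-3.11 range stated above."""
    return (3, 8) <= tuple(version_info[:2]) <= (3, 11)


# Report the interpreter and operating system actually in use.
print("Python", platform.python_version(), "supported:", python_supported())
print("OS:", platform.system(), platform.release())
```

Run it with the same interpreter that PyCharm (or the virtual environment) uses, since several Python versions may be installed side by side.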

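To install PyTorch, run the following command (it installs the latest version by default; the cu118 index selects the CUDA 11.8 build):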

pip3 install torch torchvision torchaudio --index-url https://download.pytorch.org/whl/cu118

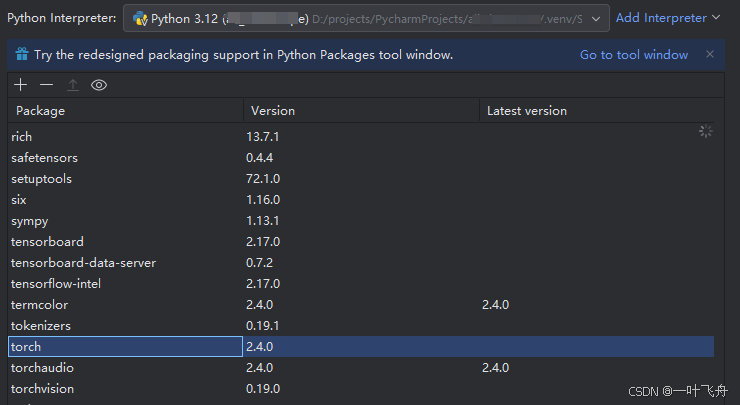

Once the installation succeeds, you should see a screen like the one in the screenshot (taken from PyCharm).
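Besides the screenshot, the installation can also be checked from Python itself. The snippet below is a minimal sketch; `describe_torch_install` is a hypothetical helper name, and it only relies on `torch.__version__` and `torch.cuda.is_available()`.

```python
import importlib.util


def describe_torch_install():
    """Report whether torch is installed and importable, plus version and CUDA status."""
    if importlib.util.find_spec("torch") is None:
        return {"installed": False}
    try:
        import torch
    except (ImportError, OSError) as exc:
        # On Windows, a missing DLL dependency surfaces here as an OSError.
        return {"installed": True, "importable": False, "error": str(exc)}
    return {
        "installed": True,
        "importable": True,
        "version": torch.__version__,
        "cuda_available": torch.cuda.is_available(),
    }


print(describe_torch_install())
```

If `importable` comes back as `False`, the `error` field carries the same message that a plain `import torch` would raise.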

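I use GPT-2 as the example (taken from the official documentation). Create a new Python file named hello_GPT2.py whose purpose is to load and call the gpt2 model; this also requires the transformers package (pip install transformers). The source code is as follows: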

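from transformers import GPT2LMHeadModel, GPT2Tokenizer

# Name of the model to load
model_name = 'gpt2'

# Load the tokenizer and the model
tokenizer = GPT2Tokenizer.from_pretrained(model_name)
model = GPT2LMHeadModel.from_pretrained(model_name)

# Input text
input_text = "Once upon a time"

# Tokenize the input text
inputs = tokenizer.encode(input_text, return_tensors='pt')

# Generate text
outputs = model.generate(
    inputs,
    max_length=100,          # maximum length of the output in tokens, prompt included
    num_return_sequences=1,  # number of sequences to return
    do_sample=True,          # enable sampling; otherwise the three settings below are ignored
    temperature=0.7,         # controls diversity: the higher the value, the more random the text
    top_k=50,                # sample only from the k most likely tokens; smaller means more focused
    top_p=0.9,               # nucleus sampling threshold, also controls diversity
    pad_token_id=tokenizer.eos_token_id,  # GPT-2 has no pad token, so reuse EOS
)

# Decode the generated text
generated_text = tokenizer.decode(outputs[0], skip_special_tokens=True)
print("Generated text:")
print(generated_text)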
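To make the three sampling parameters more concrete, here is a simplified pure-Python illustration of how temperature, top_k and top_p reshape a distribution over candidate tokens. It is a sketch for intuition only, not the transformers implementation; `filter_distribution` and the toy logits are made up for this example.

```python
import math


def filter_distribution(logits, temperature=0.7, top_k=50, top_p=0.9):
    """Return {token_index: probability} after temperature, top-k and top-p filtering."""
    # Temperature: divide the logits before softmax (<1 sharpens, >1 flattens).
    scaled = [x / temperature for x in logits]
    # Top-k: keep only the indices of the k highest-scoring tokens.
    ranked = sorted(range(len(scaled)), key=lambda i: scaled[i], reverse=True)[:top_k]
    # Softmax over the survivors (subtract the maximum for numerical stability).
    peak = scaled[ranked[0]]
    weights = [math.exp(scaled[i] - peak) for i in ranked]
    total = sum(weights)
    # Top-p: keep the smallest prefix whose cumulative probability reaches top_p.
    kept, cumulative = [], 0.0
    for idx, weight in zip(ranked, weights):
        prob = weight / total
        kept.append((idx, prob))
        cumulative += prob
        if cumulative >= top_p:
            break
    # Renormalize so the kept probabilities sum to 1.
    norm = sum(prob for _, prob in kept)
    return {idx: prob / norm for idx, prob in kept}


# Toy example with four candidate tokens.
print(filter_distribution([2.0, 1.0, 0.1, -1.0], temperature=1.0, top_k=3, top_p=0.9))
```

The next token would then be drawn at random from the returned distribution, which is why a lower temperature or a smaller top_k/top_p gives more predictable text.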

When running the code above, it is very easy to hit the following exception:

Traceback (most recent call last):
  File "D:\projects\PycharmProjects\llm_openai_gpt\hello_GPT2.py", line 1, in <module>
    from transformers import GPT2LMHeadModel, GPT2Tokenizer
  File "D:\projects\PycharmProjects\llm_openai_gpt\.venv\Lib\site-packages\transformers\__init__.py", line 26, in <module>
    from . import dependency_versions_check
  File "D:\projects\PycharmProjects\llm_openai_gpt\.venv\Lib\site-packages\transformers\dependency_versions_check.py", line 16, in <module>
    from .utils.versions import require_version, require_version_core
  File "D:\projects\PycharmProjects\llm_openai_gpt\.venv\Lib\site-packages\transformers\utils\__init__.py", line 34, in <module>
    from .generic import (
  File "D:\projects\PycharmProjects\llm_openai_gpt\.venv\Lib\site-packages\transformers\utils\generic.py", line 462, in <module>
    import torch.utils._pytree as _torch_pytree
  File "D:\projects\PycharmProjects\llm_openai_gpt\.venv\Lib\site-packages\torch\__init__.py", line 148, in <module>
    raise err
OSError: [WinError 126] 找不到指定的模块。 Error loading "D:\projects\PycharmProjects\llm_openai_gpt\.venv\Lib\site-packages\torch\lib\fbgemm.dll" or one of its dependencies.

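The key part is the final line (the Windows message 找不到指定的模块 means "The specified module could not be found"):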

OSError: [WinError 126] 找不到指定的模块。Error loading "D:\projects\PycharmProjects\llm_openai_gpt\.venv\Lib\site-packages\torch\lib\fbgemm.dll" or one of its dependencies.

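According to the message, fbgemm.dll fails to load because one of its dependencies is missing, so the direct fix is to track down the missing dependency file. Here is one way to do that: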

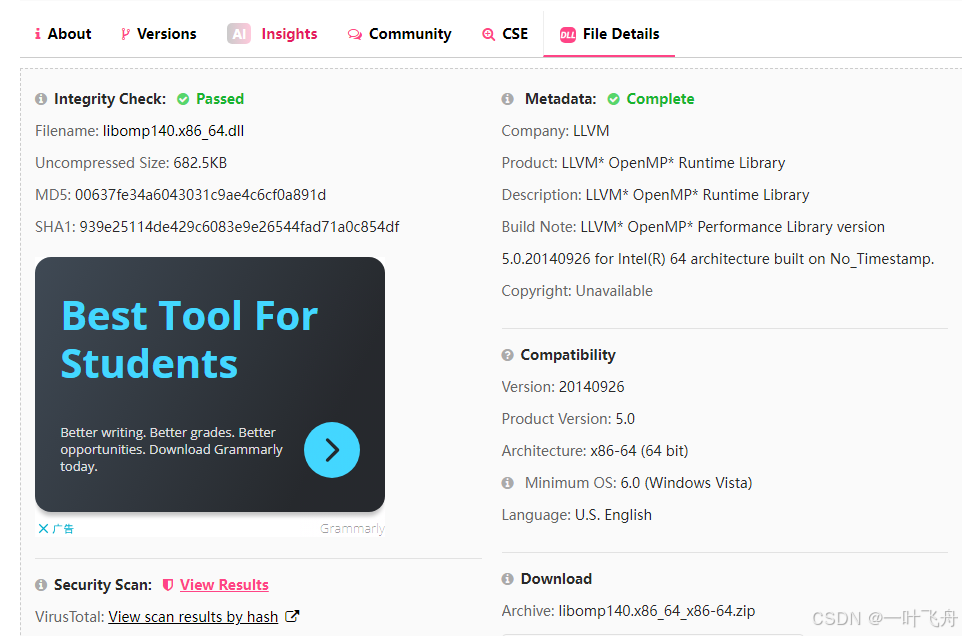

After clicking dllme.com, you will see the following page:

Click the download button in the lower-right corner to download libomp140.x86_64_x86-64.zip.

Extracting the zip yields a single file, libomp140.x86_64.dll. Move it into the Windows\System32 directory, overwriting the existing file if there is one.
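The copy step can also be scripted. The sketch below assumes the DLL has already been extracted; `install_dll` is a hypothetical helper, and the paths in the commented example are placeholders. Writing to Windows\System32 requires administrator rights, so the script would need to run from an elevated prompt.

```python
import shutil
from pathlib import Path


def install_dll(dll_path, target_dir):
    """Copy dll_path into target_dir, overwriting any existing copy; return the new path."""
    src = Path(dll_path)
    dest = Path(target_dir) / src.name
    shutil.copy2(src, dest)  # replaces dest if a file with that name already exists
    return dest


# Example call (placeholder download location, adjust to your machine):
# install_dll(r"C:\Users\me\Downloads\libomp140.x86_64.dll", r"C:\Windows\System32")
```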

Once this is done, the exception should no longer occur.
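One way to confirm that the DLL is now visible to the system loader, before re-running the GPT-2 script, is to try loading it by name with `ctypes`. This is a rough check under the assumption that libomp140.x86_64.dll was the only missing dependency; `can_load` is a hypothetical helper name.

```python
import ctypes


def can_load(library_name):
    """Return True if the OS loader can find and load the named shared library."""
    try:
        ctypes.CDLL(library_name)
    except OSError:
        return False
    return True


print("libomp140.x86_64.dll loadable:", can_load("libomp140.x86_64.dll"))
```

If this prints `True` but `import torch` still fails with the same error, another dependency of fbgemm.dll is probably missing as well.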

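This article addresses the error OSError: [WinError 126] 找不到指定的模块 (The specified module could not be found), Error loading "PATH\torch\lib\fbgemm.dll" or one of its dependencies, which appears after installing PyTorch 2.4. Other exceptions may need further investigation. If you have questions or spot a mistake, corrections are welcome!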
